It ain’t simple keeping a camera functioning properly in orbit around Mars

In doing my normal exploration through the monthly download of new images from the high resolution camera on Mars Reconnaissance Orbiter (MRO), the last to occur near the end of February, I came across a slew of 49 images, each labeled as an “ADC Settings Test,” each covering a completely different location with no obvious single object of study, almost as if they were taken in a wildly random manner.

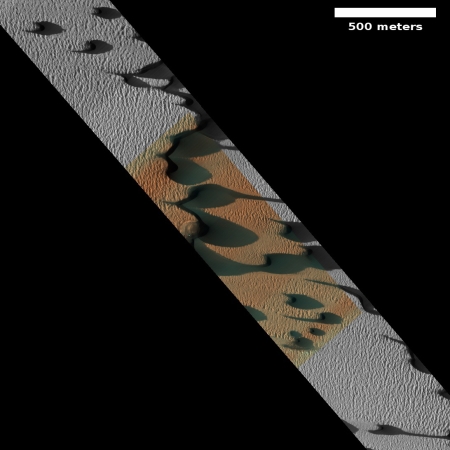

The image to the right, cropped and reduced to post here, is a typical example. It shows the mega dunes located near the end of Chasma Boreale, the canyon that cuts a giant slash into the Martian north polar ice cap, almost cutting off one third of the ice cap.

The black areas are shadows. They are long because at the high latitude of 84 degrees the Sun never gets very high in the sky, even though this image was taken just before mid-summer, when the Sun was at its highest.

I was puzzled why these images were being taken, and contacted Ari Espinoza, the media rep for the high resolution camera, to ask if he could put me in touch with a scientist who could provide an explanation. He in turn suggested I contact Shane Byrne of the Lunar and Planetary Lab at the University of Arizona, with whom I had coincidentally already spoken several times before in connection with the annual summer avalanche season at the Martian north pole.

Dr. Byrne first suggested I read this abstract [pdf], written for the 2018 Lunar and Planetary Science conference by the camera’s science team. In it they outline two issues with the camera, the first being blurred images and the second an increasing number of bad pixels occurring in images over time.

The first problem has since been solved. To preserve battery life (another long-term problem that they have to deal with), they had adjusted the orbiter’s orbit slightly to get more sunlight and stopped warming the camera during the night periods. “That had the unfortunate effect of changing the camera’s focus,” explained Byrne. “Since we understand that now, we do warm-ups before taking the images and that fixed the blurring problem.”

The other problem however remains, and these ADC test images are an effort to fix it.

Since the first orbital missions in the 1960s space engineers have had to deal with a phenomenon they dub bitflips, where for a variety of reasons a binary data point flips from 0 to 1, or vice versa. These can happen randomly, and are mostly thought to occur due to the high radiation environment of space. For example, a cosmic ray might hit a computer’s memory chip and flip a bit.
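To see why a single flipped bit matters, here is a minimal Python sketch. The function name `flip_bit` and the stored value of 1000 are hypothetical, picked only for illustration; nothing here reflects the camera's actual data format. It shows that the damage depends entirely on which bit gets hit.

```python
def flip_bit(value: int, bit: int) -> int:
    """Return value with one bit inverted (bit 0 is the least significant)."""
    return value ^ (1 << bit)


pixel = 1000  # hypothetical stored brightness value

# A flip in the lowest bit is barely noticeable.
print(flip_bit(pixel, 0))   # 1001

# A flip in a high bit turns a dim pixel into a very bright one.
print(flip_bit(pixel, 13))  # 9192
```

Flipping the same bit a second time restores the original value, which is why knowing the position of an error is enough to repair it.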

Most space equipment is well hardened to protect it from these bitflips, but they still happen periodically. On MRO they found they could mitigate the issue by warming the camera (which is one of the reasons they periodically take random unrequested images). By taking a picture they can raise the camera’s temperature a measurable amount, thereby regulating it.

The concern is that the problem has been getting worse with time, requiring them to warm the camera an additional 2.7 degrees Fahrenheit per year. “Right now the temperature is way higher than anything we ever thought we’d run the camera at,” notes Byrne. “Way higher than the people who built the camera are comfortable with.”

Since they can’t keep raising the camera’s temperature ad infinitum, the ADC settings test images were taken as an attempt to find a different solution that would not require heating the camera. ADC stands for Analog-to-Digital Conversion. This is the software that converts the analog photon data received by each pixel into a digital number. They theorize that they might be able to change how the software converts the waveform to digital information in such a way as to reduce the number of bitflips, without affecting the overall data itself.
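For readers unfamiliar with the idea, analog-to-digital conversion in general can be sketched in a few lines of Python. This is a generic quantizer, not the camera's actual conversion logic, which is not described here. The function name `quantize`, the `full_scale` parameter, and the 8-bit depth are all assumptions made for illustration.

```python
def quantize(signal: float, full_scale: float, bits: int) -> int:
    """Map an analog level in [0, full_scale] to an integer of the given bit depth."""
    levels = (1 << bits) - 1                          # largest representable number
    clamped = min(max(signal, 0.0), full_scale)       # saturate out-of-range input
    return round(clamped / full_scale * levels)


# Hypothetical 8-bit converter with a full-scale input of 1.0
print(quantize(0.0, 1.0, 8))   # 0
print(quantize(0.25, 1.0, 8))  # 64
print(quantize(2.0, 1.0, 8))   # 255 (saturated)
```

The settings of a real converter decide how the analog range is mapped onto those integers, which is presumably the kind of thing a settings test would vary.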

At this moment however they do not yet have an answer, having just taken the images. “We still have to analyze the data,” explains Byrne. They also want to do more tests. If all goes well they hope to complete this work within a year.

This story, one of many faced every day by space engineers, illustrates the difficulties of building and operating a spacecraft millions of miles away. In concept the technology might be relatively simple. In practice the engineering is incredibly complex, and great care must be taken not only to keep things running properly, but also to make sure the data obtained is understandable and reliable.

Think about this the next time you hear someone nonchalantly wax poetic about how they are only a few years away from mining the Moon and the asteroids or colonizing Mars. It will help you separate the wheat from the chaff.

We will eventually do all these things, but we won’t do it by underestimating the challenge.

On Christmas Eve 1968 three Americans became the first humans to visit another world. What they did to celebrate was unexpected and profound, and will be remembered throughout all human history. Genesis: the Story of Apollo 8, Robert Zimmerman's classic history of humanity's first journey to another world, tells that story, and it is now available as both an ebook and an audiobook, both with a foreword by Valerie Anders and a new introduction by Robert Zimmerman.

The print edition can be purchased at Amazon or from any other book seller. If you want an autographed copy the price is $60 for the hardback and $45 for the paperback, plus $8 shipping for each. Go here for purchasing details. The ebook is available everywhere for $5.99 (before discount) at amazon, or direct from my ebook publisher, ebookit. If you buy it from ebookit you don't support the big tech companies and the author gets a bigger cut much sooner.

The audiobook is also available at all these vendors, and is also free with a 30-day trial membership to Audible.

"Not simply about one mission, [Genesis] is also the history of America's quest for the moon... Zimmerman has done a masterful job of tying disparate events together into a solid account of one of America's greatest human triumphs."--San Antonio Express-News

Bob: SEUs, or Single Event Upsets (aka bit flips) are indeed radiation artifacts. Sadly however shielding does little to mitigate them as there is a lot of very high energy radiation in space which will go right through most any shielding. For example, the highest energy cosmic rays pack the energy of a major league fastball in a SINGLE PROTON!!!

The only way to mitigate these is through a fault tolerant architecture. Such systems use error detection and correction on all of their internal memories and data path circuits, but to fully mitigate this problem you need a redundant architecture that employs 2 or more parallel circuits (for space it’s typically trimode, or what I like to call RAMA logic : ) and the system runs 3 separate copies of a program and compares and votes on each operation. I read an article in Aviation Week and Space Technology back in the 1980s that described the Space Shuttle flight control system, and that architecture used FIVE computers in parallel with a voting algorithm that would operate down to a two computer configuration with metrics to decide which was “right” in the event of a discrepancy. Very neat stuff!!!
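The voting idea can be sketched in Python as a bitwise majority over three redundant copies. This is a toy illustration only: the name `majority_vote` and the sample values are hypothetical, and real flight systems do this in hardware or across whole computers rather than on single integers.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Return the bitwise majority of three redundant copies of a value."""
    # A result bit is 1 only when at least two of the three copies have it set.
    return (a & b) | (a & c) | (b & c)


correct = 0b10110010
upset = correct ^ (1 << 5)   # one copy suffers a single bit flip

# The two good copies outvote the damaged one.
print(majority_vote(correct, upset, correct) == correct)  # True
```

The scheme fails only if two copies are hit in the same bit position before the next vote, which is why such systems vote frequently.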

You can’t do this with cameras, but you can take 2 consecutive frames of the same target and screen out bit flips by deleting pixels that aren’t in both images. I guess sensor temp might help mitigate lower energy events a bit from the solar wind and such (which obviously is the most pervasive source). But I doubt this would be particularly effective.
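The two-frame idea might look something like the following Python sketch. It assumes the two frames are perfectly aligned and represented as nested lists of integers; the names `screen_frames` and `tolerance` are hypothetical, and a real pipeline would also have to cope with noise and spacecraft motion between exposures.

```python
from typing import List, Optional

Frame = List[List[int]]


def screen_frames(first: Frame, second: Frame,
                  tolerance: int = 0) -> List[List[Optional[int]]]:
    """Keep pixels that agree in both frames; mark disagreeing pixels as None."""
    if len(first) != len(second) or any(
        len(r1) != len(r2) for r1, r2 in zip(first, second)
    ):
        raise ValueError("frames must have the same dimensions")
    return [
        [p1 if abs(p1 - p2) <= tolerance else None for p1, p2 in zip(r1, r2)]
        for r1, r2 in zip(first, second)
    ]


frame_a = [[10, 12], [11, 13]]
frame_b = [[10, 12], [11, 13 ^ (1 << 12)]]  # one pixel hit by a bit flip

print(screen_frames(frame_a, frame_b))  # [[10, 12], [11, None]]
```

Note that two frames can only tell you that a pixel is suspect, not which frame holds the right value. A third frame would allow a vote.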

If you’re interested in this stuff you might want to see if you can get a tour of the neutron lab at Los Alamos some time. Many semiconductor companies test their chips at this facility placing devices in a powerful neutron beam to trigger failures. This allows them to characterize SEU sensitivity in just a few hours or days that would take many months or even years in a normal lab (with 10s to 100s of test units to accumulate enough test time).

To make the point about shielding: the neutron accelerator at Los Alamos is in a separate building from the devices to be tested, and when they turn it on the beam is simply aimed at the cement wall, which it passes right through, and there is no window!

You can learn more on this topic here as well:

https://www.intel.com/content/www/us/en/programmable/support/quality-and-reliability/seu.html

MDN: As Wayne would say, “Good stuff!”

Mr. Z.,

(yepper, I say that a bit.)

Pictures are always nice, but I do love the inside-baseball techy-stuff.

Q:

Do you use the “HiView” software at the [ https://www.uahirise.org/hiview/ ] website, to look at the pics you find?

Wayne asked, “Do you use the “HiView” software at the [ https://www.uahirise.org/hiview/ ] website, to look at the pics you find?”

Nope. I look at the images as they are posted on line, using my web browser. If I decide to post about an image, I download it and use The Gimp graphics software to do the cropping, rotating, reducing, annotating etc, necessary for posting.

The HiView software would make available higher resolution versions of the images, but require a lot of memory and computer speed, more than necessary for what I do.

I had to smile reading your article about “bit flips” caused by energetic particles. I’m retired now, but back 40-odd years ago when I first started in the IT field, I was told by a co-worker about a paper he’d seen, produced by IBM back in those days, on this very subject.

IBM studied this as even back then, they were noting bit errors mysteriously showing up in their computers (this being back in the days of the big mainframe machines) and they didn’t know why. And yes, IBM discovered that even the few cosmic ray particles that made it through the Earth’s atmosphere could cause the occasional “bit flips” in their systems. Certainly rare compared to what the MRO’s computers must endure in Mars orbit, but it happens even here on the surface of the Earth.

IBM was and remains one of the leaders on fault tolerant and error detection and correction technology.

Today’s personal computers have no protection of main memory. If there is a bit flip it will corrupt the system as a silent (undetected, the worst kind) error which may or may not crash your system or corrupt your data. Back in the day PCs supported Parity protected memory that could at least detect an error, which would cause a hard error so at least you wouldn’t suffer silent data corruption. This increases cost though and such errors are extremely rare, so it was dropped.
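Parity protection is simple enough to sketch in a few lines of Python. The function names and the sample byte are hypothetical. The sketch also shows the known limitation of a single parity bit: it catches one flip but is blind to two.

```python
def parity_bit(word: int) -> int:
    """Even parity: 1 if the word has an odd number of set bits, else 0."""
    return bin(word).count("1") % 2


def check(word: int, stored_parity: int) -> bool:
    """Return True when the word still matches the parity saved with it."""
    return parity_bit(word) == stored_parity


word = 0b10110010
saved = parity_bit(word)                 # stored alongside the data

one_flip = word ^ (1 << 3)
two_flips = word ^ (1 << 3) ^ (1 << 6)

print(check(word, saved))       # True  (no error)
print(check(one_flip, saved))   # False (single flip detected)
print(check(two_flips, saved))  # True  (double flip slips through)
```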

Server processors use ECC (Error Correction Code) protected memory that stores 72 bits in memory for every 64 bits of user data. This allows for what is called SECDED operation (Single Error Correct, Double Error Detect). This means the system will detect and automatically correct any single bit flip in real time with no impact on system performance, and will detect and report a double flip too. This is the industry standard today and is quite sufficient for most terrestrial applications.
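The SECDED principle can be shown at toy scale in Python. The sketch below uses an extended Hamming code that protects 4 data bits with 4 check bits, rather than the 64 data bits and 8 check bits described above, so it illustrates the technique and is not the layout any real memory controller uses. The function names and bit ordering are assumptions made for the example.

```python
from typing import List, Optional, Tuple


def encode(nibble: int) -> List[int]:
    """Encode 4 data bits as an 8-bit extended Hamming codeword (list of bits)."""
    d = [(nibble >> i) & 1 for i in range(4)]
    code = [0] * 8                      # index 0: overall parity; 1-7: Hamming(7,4)
    code[3], code[5], code[6], code[7] = d
    code[1] = code[3] ^ code[5] ^ code[7]
    code[2] = code[3] ^ code[6] ^ code[7]
    code[4] = code[5] ^ code[6] ^ code[7]
    code[0] = sum(code[1:]) % 2         # makes the total number of 1s even
    return code


def decode(code: List[int]) -> Tuple[str, Optional[int]]:
    """Return (status, data); data is None when the error cannot be corrected."""
    syndrome = 0
    for pos in range(1, 8):
        if code[pos]:
            syndrome ^= pos             # XOR of set positions locates a single flip
    odd_total = sum(code) % 2           # 1 means an odd number of bits flipped
    fixed = list(code)
    if syndrome == 0 and odd_total == 0:
        status = "ok"
    elif odd_total == 1:
        fixed[syndrome] ^= 1            # syndrome 0 here means the parity bit itself
        status = "corrected"
    else:
        return ("double error detected", None)
    nibble = fixed[3] | (fixed[5] << 1) | (fixed[6] << 2) | (fixed[7] << 3)
    return (status, nibble)


word = encode(0b1011)
print(decode(word))                     # ('ok', 11)

word[6] ^= 1                            # one bit flip
print(decode(word))                     # ('corrected', 11)

word[2] ^= 1                            # a second flip in the same word
print(decode(word))                     # ('double error detected', None)
```

The extra overall parity bit is what separates the two cases: a single flip makes the total parity odd, while a double flip leaves it even but still produces a nonzero syndrome.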

IBM however goes even a step further in their top end proprietary systems, supporting DECDED operation that can even correct two simultaneous bit flips with no impact on system operation. They really do take this stuff seriously and that is why they are still quite prevalent in Infrastructure Platforms (financial systems, power grid, military and such) because these really need to be true 24/7/365 platforms. Errors WILL happen, so if you need to keep running when they do then IBM is among the best you can get.